AI as Process Infrastructure: Practical Learnings from an AI-augmented RFC Process

- 14 Jan, 2026

When the RFC (Request for Comments) process was first introduced to our engineering organization, it was highly successful. Engineers appreciated its transparency, the democratized technical decision-making it enabled, and its non-disruptive, asynchronous nature. The process was used effectively to drive a handful of critical, high-blast-radius decisions across the company.

Nearly 18 months later, the process was still valuable, but cracks started to show. While the first few RFCs were processed like a well-oiled machine, nearly 60% of the ~20 RFCs created in the last nine months remained open with no updates for more than six weeks.

I analyzed our RFC list and made a few observations:

- Visibility bias: RFCs with high visibility received attention from all participants, leading to decisive, high-quality discussions and fast resolutions. Conversations in other RFCs dragged on, with days or weeks between responses, if they were not abandoned entirely.

- Hesitation to commit: In many cases, reviewers hesitated to mark the RFC as “approved” even after the discussion had converged on a final solution.

- Stale documentation: Several RFCs had reached a conclusion, and the author acted on them but did not update the document to reflect the final state.

Our first instinct was to strengthen the process by introducing a biweekly RFC review meeting and assigning an engineering manager to act as an RFC process owner/facilitator. The tradeoffs were clear: we would ensure the process continued to work effectively and increase participation, but at the cost of management overhead and yet another expensive, large-group recurring meeting that disrupted engineers’ focus. Given the strategic value of RFCs, we thought the tradeoff might be worth it.

Fortunately, there was a new force multiplier in town. We had access to LLMs, and we decided we could leverage them to streamline and level up the process without adding more meetings.

The AI Process Coordinator

Given the right tooling and conditions, an LLM can be a surprisingly competent process coordinator and facilitator. Recognizing LLMs’ capabilities and limitations, our goal was not to have the AI judge technical decisions, but to facilitate visibility, timeliness, and closure.

We implemented a simple app that runs daily, collects all open RFCs from Confluence, and passes them one by one to the OpenAI API, asking it to analyze each document and answer predefined questions such as:

- Progress: Is the RFC on schedule based on its stated milestones?

- Engagement: Are there unaddressed comments?

- Action Items: Are there pending next steps for the author or reviewers?

- Are there questions waiting to be answered for more than 3 days?

- Should the author change the RFC status (e.g., to move it to the next stage)?

- Have all questions been answered while one or more reviewers still haven’t signed off?

- Document quality: Does the RFC meet our quality standards?

- Escalation: Should the RFC be flagged for management intervention?

For each of those questions, our code would act on the LLM’s structured answer by sending Slack reminders to the relevant people and visualizing the results in our RFC Dashboard.
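As a rough illustration of how code might act on a structured answer, the sketch below parses a JSON reply and turns it into reminders. The field names (`unanswered_questions`, `missing_signoffs`, `suggested_status`), the `Reminder` type, and the JSON shape are assumptions made for this example, not the schema our tool uses. Sending the messages to Slack is left out. Note that the 3-day threshold is applied in plain code rather than by the model.

```python
import json
from dataclasses import dataclass
from datetime import date


@dataclass
class Reminder:
    recipient: str
    message: str


def plan_reminders(raw_answer: str, author: str, today: date,
                   max_wait_days: int = 3) -> list[Reminder]:
    """Turn one structured LLM answer (hypothetical schema) into reminders."""
    answer = json.loads(raw_answer)
    reminders: list[Reminder] = []

    # The model only reports who asked and when; the age check happens here.
    for q in answer.get("unanswered_questions", []):
        waiting = (today - date.fromisoformat(q["asked_on"])).days
        if waiting > max_wait_days:
            reminders.append(Reminder(
                author, f"Question from {q['asked_by']} has waited {waiting} days."))

    # Discussion has converged but sign-offs are missing: nudge each reviewer.
    for reviewer in answer.get("missing_signoffs", []):
        reminders.append(Reminder(
            reviewer, "Please sign off or raise remaining concerns."))

    # The model only suggests a status change; the author decides.
    if answer.get("suggested_status"):
        reminders.append(Reminder(
            author, f"Consider moving the RFC to '{answer['suggested_status']}'."))
    return reminders


sample = json.dumps({
    "unanswered_questions": [
        {"asked_by": "dana", "asked_on": "2026-01-05"},
        {"asked_by": "lee", "asked_on": "2026-01-09"},
    ],
    "missing_signoffs": ["sam"],
    "suggested_status": "Approved",
})
for r in plan_reminders(sample, author="alex", today=date(2026, 1, 10)):
    print(r.recipient, "->", r.message)
```

With this sample, only the question from `dana` crosses the threshold, so the author gets two reminders and the reviewer `sam` gets one.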

Note: We used our GDPR-compliant OpenAI Enterprise agreement, which provides data security and privacy guarantees, ensuring our internal technical strategies remained confidential.

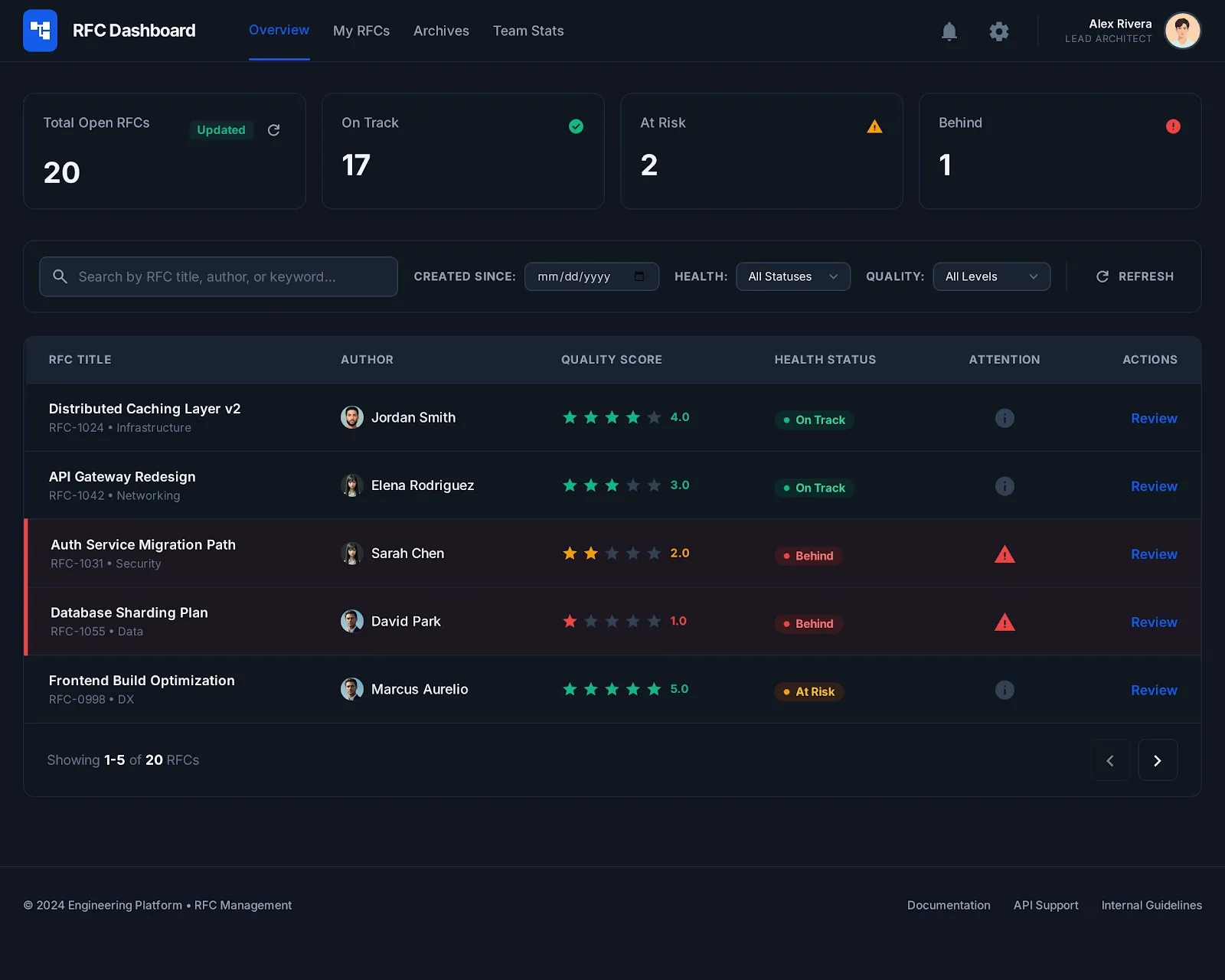

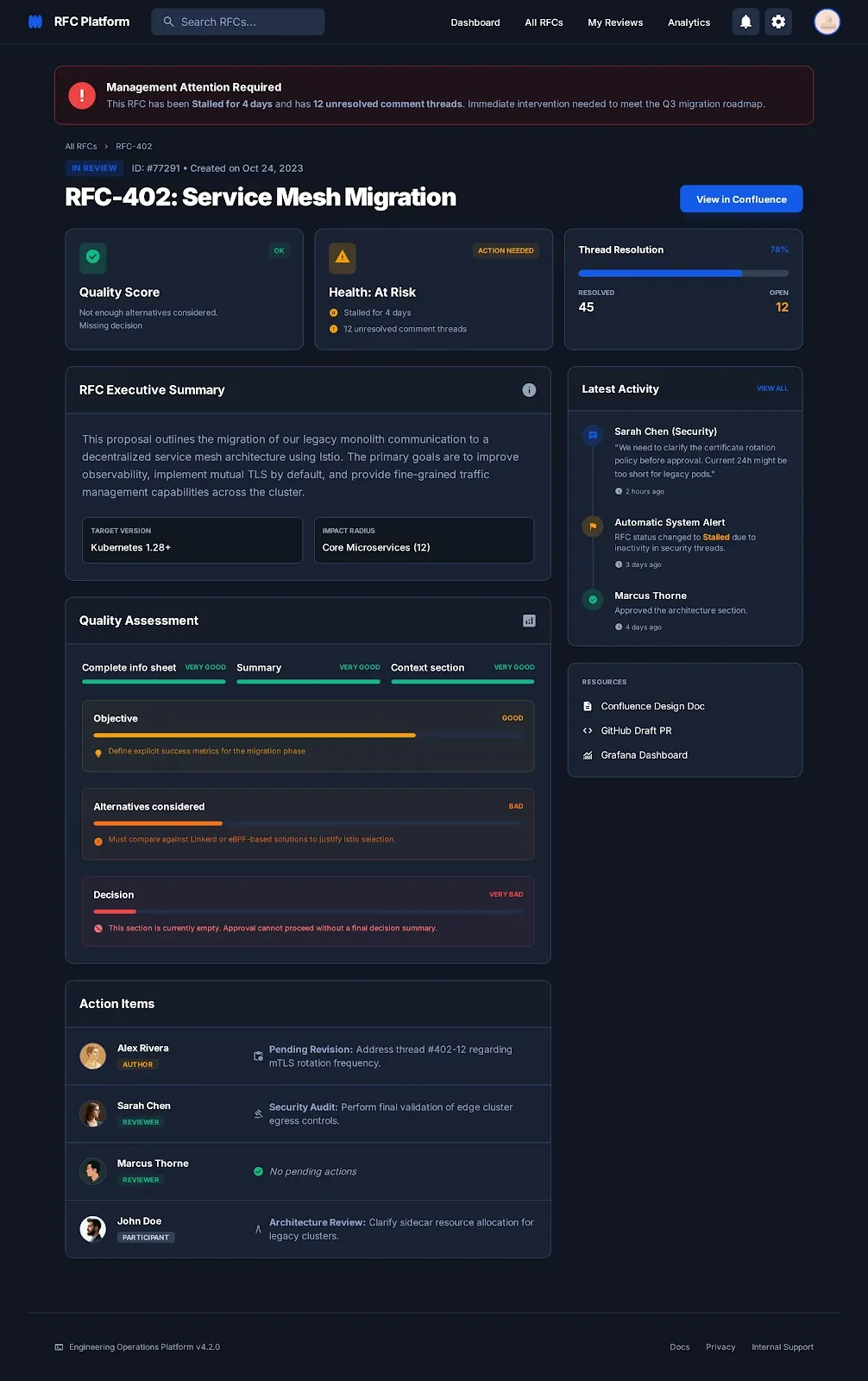

I can’t share real system screenshots, but this AI-generated mockup can give you a clear picture of the management dashboard.

Results

The results were impressive. The reminders effectively placed RFC activities back into the participants’ schedules. We set the tool to send reminders at 7:00 AM, allowing engineers to see them at the start of their day and act on them before entering deep focus mode.

- We were able to resolve the backlog of stalled RFCs within four weeks by progressing them to completion or clearly updating and marking the abandoned ones as closed.

- All new RFCs are currently on track. Since deployment, only two RFCs required deadline extensions, which were surfaced and approved early thanks to the system’s governance.

The dashboard also provided a “single pane of glass” view where managers can easily gauge where their support or intervention is needed.

Practical Advice

If you are considering a similar approach, here is what worked for us:

1. Have a clear workflow. We defined a workflow with distinct stages and responsibilities and “educated” the AI on the expectations of each stage. This allowed the AI to accurately identify when action items were missing. A well-defined process is table stakes for team members to participate effectively, and doubly so for smooth AI facilitation.

2. Use a simple, semi-structured format. Our RFC template starts with a simple table where the author must fill in key info, such as reviewers and dates for main milestones. This allows the AI to easily extract the data it needs to answer our questions without risk of hallucination or mix-ups.

One thing that worked really well for us was using emojis (📝💬✅❌🚀) in the RFC titles to indicate their state. In addition to being easier for human readers to parse on the RFCs list page in Confluence, it also made filtering the RFCs (slightly) easier for the tool.
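To show why title emojis make filtering simple, here is a minimal sketch. The mapping from each emoji to a state, the set of "open" states, and the example titles are all assumptions for illustration; only the emojis themselves come from our convention.

```python
from typing import Optional

# Hypothetical emoji-to-state mapping; the real meanings may differ.
STATUS_BY_EMOJI = {
    "📝": "draft",
    "💬": "in_review",
    "✅": "approved",
    "❌": "rejected",
    "🚀": "implemented",
}
OPEN_STATES = {"draft", "in_review"}  # assumed definition of "open"


def status_from_title(title: str) -> Optional[str]:
    """Return the state for the first known emoji in the title, else None."""
    for ch in title:
        if ch in STATUS_BY_EMOJI:
            return STATUS_BY_EMOJI[ch]
    return None


def open_rfcs(titles: list[str]) -> list[str]:
    """Keep only titles whose emoji marks them as still open."""
    return [t for t in titles if status_from_title(t) in OPEN_STATES]


titles = [
    "📝 RFC-21: Queue migration",
    "✅ RFC-18: Auth tokens",
    "💬 RFC-22: Log retention",
    "RFC-9: No status emoji",
]
print(open_rfcs(titles))
```

Titles without a recognized emoji are skipped here; a real tool might instead flag them so the author can fix the title.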

3. AI needs guardrails. Theoretically, similar results could’ve been achieved by giving an AI agent MCP/tool access to Confluence, Slack and a database, and writing a robust prompt. In practice though, AI loses 39% accuracy when given 6 tasks, and AI cognitive overload is a real issue. The AI-in-the-loop approach described here leverages LLMs’ ability to analyze and assess natural-language text, while providing the structure they lack.
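One way to read this point: deterministic code owns the loop and the side effects, and the model gets one narrow question per call. The sketch below assumes a hypothetical `ask_llm` callable standing in for whatever API client is used. The question wording, the keys, and the yes/no reply format are illustrative rather than our actual prompts.

```python
from typing import Callable

# Illustrative questions; each is sent in its own model call.
QUESTIONS = {
    "engagement": "Are there unaddressed comments? Answer yes or no.",
    "signoff": "Are all questions answered while a reviewer has not signed off? Answer yes or no.",
    "escalation": "Should this RFC be flagged for management intervention? Answer yes or no.",
}


def review_rfc(rfc_text: str, ask_llm: Callable[[str], str]) -> dict[str, bool]:
    """Ask one narrow question per call; code, not the model, drives the loop."""
    results: dict[str, bool] = {}
    for key, question in QUESTIONS.items():
        prompt = f"{question}\n\nRFC document:\n{rfc_text}"
        reply = ask_llm(prompt).strip().lower()
        # Anything other than a clear "yes" is treated as "no".
        results[key] = reply.startswith("yes")
    return results


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call, used only to make the sketch runnable."""
    return "Yes" if "unaddressed comments" in prompt else "No"


print(review_rfc("...RFC body...", fake_llm))
```

Because the model is passed in as a plain function, the loop can be tested with a stub like `fake_llm` and the real client swapped in later.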

4. Keep a sharp focus. We debated whether the tool should leverage AI to enrich the document content or answer comments, but we opted to focus strictly on process governance and facilitation. We trust our engineers to leverage AI for writing and responding to comments when it makes sense, and to verify its output.

5. Define “Quality” for the AI. Don’t ask the AI to just “evaluate the quality of the RFC.” Instead, give it clear criteria and examples. We instructed the model to assess the quality of the document structure and the discussion health on a scale from “Very Bad” to “Very Good.” While we don’t trust it blindly to assess engineering correctness, we found it highly reliable for structural checks:

- Were enough alternatives considered?

- Is the problem definition clear?

- Are the pros and cons listed properly?

- Are there contradictions in the proposed solution?

- Are there unanswered questions in the comments?
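The checklist above could be turned into an explicit rubric along these lines. This is a sketch under assumptions: the three middle labels of the scale, the prompt wording, and the `needs_attention` threshold are invented for illustration. Only the endpoints “Very Bad” and “Very Good” and the five checks come from our setup.

```python
# Endpoints are from our scale; the three middle labels are assumed.
SCALE = ["Very Bad", "Bad", "Fair", "Good", "Very Good"]

CRITERIA = [
    "Were enough alternatives considered?",
    "Is the problem definition clear?",
    "Are the pros and cons listed properly?",
    "Are there contradictions in the proposed solution?",
    "Are there unanswered questions in the comments?",
]


def build_quality_prompt(rfc_text: str) -> str:
    """Spell out the labels and checks instead of asking for 'quality'."""
    checks = "\n".join(f"- {c}" for c in CRITERIA)
    return (
        "Rate the RFC's document structure and discussion health.\n"
        f"Use exactly one of these labels: {', '.join(SCALE)}.\n"
        f"Base the rating on these checks:\n{checks}\n\n"
        f"RFC document:\n{rfc_text}"
    )


def needs_attention(label: str, threshold: str = "Fair") -> bool:
    """True when the rating is below the threshold; unknown labels are flagged."""
    normalized = label.strip().title()
    if normalized not in SCALE:
        return True
    return SCALE.index(normalized) < SCALE.index(threshold)


print(build_quality_prompt("...RFC body..."))
print(needs_attention("bad"), needs_attention("Very Good"))
```

Flagging unknown labels rather than ignoring them is a deliberate choice in this sketch: a reply that falls outside the scale is a sign the prompt or the model needs a look.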

6. This is a blueprint. This pattern can - and should - be used in different processes across the organization. Think of it as a blueprint for using LLM abilities and constraints effectively to level up processes. We have also used this pattern to improve our incident management and postmortem process.

Conclusion

The key lesson here is that an AI-in-the-loop architecture can be really powerful when used appropriately.

With their current capabilities and limitations, LLMs should not replace human judgment or technical decision-making. They are also poorly suited for executing deterministic business rules, which are better handled by traditional code. LLMs excel at reasoning over unstructured information and identifying communication gaps, which can be used to augment almost all business processes.

We are already applying this same blueprint to other domains, proving that with the right guardrails, AI can be the ultimate process facilitator for any business workflow.